Looking ahead: Today, Arm announced the Arm AGI CPU, the first production silicon product designed and sold directly by Arm in the company's 35-year history. This isn't an IP license and it's not a compute 'reference' design. It's a finished chip, manufactured by TSMC on 3nm, built for AI data centers and aimed squarely at agentic AI workloads.

For the past three years, every data center conversation has started and ended with GPUs. Training clusters and inference racks and accelerator roadmaps. If you worked in data center silicon and you were not talking about GPUs, people looked at you like you were lost.

Ryan Shrout is a longtime technology analyst and industry veteran who has spent over two decades covering PC hardware, graphics, and semiconductors. He previously led technical marketing at Intel and was the founding editor of PC Perspective. He is currently President and GM at Signal65. You can follow him on X @ryanshrout.

I know the feeling. I spent years at Intel leading data center marketing, trying to make the case that Xeon CPUs had a meaningful role in AI workloads. The response was polite but dismissive. AI was a GPU problem. The CPU was a host processor, a necessary tax on the system, not the point of the system.

Turns out that argument was just ahead of its time.

The introduction of Arm's AGI CPU matters for reasons that go well beyond one product launch. Agentic AI is structurally changing the CPU-to-GPU ratio in data center infrastructure, and the industry is only beginning to grapple with what that means.

The CPU renaissance nobody expected

Training-era architectures assumed GPUs would dominate every phase of AI. For pure model training and high-throughput batch inference, that assumption holds. But agentic workloads have introduced an entirely different compute profile.

When an agent calls a tool, queries a database, waits for human approval, or orchestrates sub-agents, the GPU is allocated but not active. The CPU handles the work. A Georgia Tech and Intel research paper profiling five representative agentic workloads found that CPU-side tool processing accounts for up to 90.6% of total latency.

– Ryan Shrout (@ryanshrout) March 24, 2026

Production data tells the same story. Anyscale documented that in real-world AI pipelines, CPU-heavy and GPU-heavy stages are often packaged together, leaving GPUs allocated but idle during CPU-bound work. By disaggregating those stages, they achieved an 8x reduction in GPU requirements for the same workload.
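A back-of-envelope way to see why those two numbers point in the same direction is sketched below in Python. It assumes an idealized pipeline in which a GPU is held for the entire request but only does useful work during the GPU-bound share of latency. The function names are hypothetical, and the model ignores batching, queueing, and scheduler overhead, so it illustrates the reasoning rather than how either study measured its results.

```python
def gpu_busy_fraction(cpu_latency_share: float) -> float:
    """Share of allocated GPU time spent on useful work when the GPU
    is held for the whole request (idealized, no batching or overlap)."""
    if not 0.0 <= cpu_latency_share <= 1.0:
        raise ValueError("cpu_latency_share must be between 0 and 1")
    return 1.0 - cpu_latency_share


def implied_busy_fraction(gpu_reduction_factor: float) -> float:
    """GPU busy share implied if separating CPU and GPU stages cuts the
    GPU count by the given factor (idealized: GPUs were otherwise idle)."""
    if gpu_reduction_factor < 1.0:
        raise ValueError("gpu_reduction_factor must be at least 1")
    return 1.0 / gpu_reduction_factor


# 90.6% is the upper bound on CPU-side tool latency cited from the paper.
worst_case_busy = gpu_busy_fraction(0.906)
# 8x is the GPU reduction Anyscale reported after disaggregating stages.
anyscale_busy = implied_busy_fraction(8.0)

print(f"GPU busy share at 90.6% CPU latency: {worst_case_busy:.1%}")
print(f"GPU busy share implied by an 8x reduction: {anyscale_busy:.1%}")
```

Under these simplifying assumptions, both figures describe GPUs that spend only around a tenth of their allocated time on useful work.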

And our own Signal65 testing shows that even for host node tasks, the CPU you pick can have a material impact on performance and TCO.

The pattern scales with agent complexity. Simple chatbot Q&A runs 90-95% on GPUs. RAG with retrieval shifts to 50-75% CPU. Multi-agent orchestration runs 60-70% CPU. Tool-heavy agents like SWE-Agent operate at 80-90% CPU. The more reasoning steps, tool calls, and sub-agent coordination in a workflow, the more the compute balance tilts toward the CPU.

Futurum Group projects CPU-to-GPU ratios in AI clusters are climbing back toward 1:1 and forecasts CPU market growth reaching 34.9% by 2029, outpacing GPUs and XPUs. Bank of America estimates the total CPU market could more than double from $27 billion in 2025 to $60 billion by 2030.
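For a sense of scale, the Bank of America endpoints can be converted into an implied compound annual growth rate. The short Python sketch below does only that arithmetic. The helper name is hypothetical, and it assumes smooth compounding over the five years between 2025 and 2030, which the estimate itself does not state.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints from the Bank of America estimate cited above.
cpu_market_cagr = implied_cagr(27e9, 60e9, 2030 - 2025)
print(f"Implied CPU market CAGR, 2025-2030: {cpu_market_cagr:.1%}")
```

That works out to a little over 17% per year, sustained for five years.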

This is not speculative. AMD has said publicly that CPU demand is exceeding expectations, driven specifically by agentic AI applications. Intel has acknowledged it misjudged the demand trajectory and is reallocating wafer capacity from client to server. The supply side did not see this coming.

Agents are digital workers, and digital workers need compute

The important framing here goes beyond GPU idle cycles and orchestration overhead. Think about what agents actually do once the LLM reasoning step finishes.

An agent books a flight, processes an invoice, queries a CRM, compiles a report, schedules a meeting. The inference portion of that workflow runs on GPUs or accelerators. But the actual work the agent executes afterward, hitting APIs, interacting with enterprise applications, reading and writing to databases, moving data through web services, all of that runs on general-purpose compute.

The world's enterprise software stack is not getting rewritten for accelerators. Email servers, database engines, ERP systems, CRM platforms, none of these are GPU-native, and nobody is going to make them GPU-native. But agents are going to be calling into these systems at machine speed, millions of transactions where a human used to generate dozens.

This is the insight that reshapes the demand model. Agents do not just need CPUs for coordination. They generate entirely new CPU workloads by doing real work, at digital speed, across systems that were built for CPUs and will remain on CPUs. That means net-new demand: not a reshuffling of existing infrastructure, but an entirely new compute tier that did not exist before agentic AI.

Arm puts a number on it in today's press release. Data centers are expected to require more than 4x the current CPU capacity per GW to support agent-driven applications. That is not a GPU story. That is a CPU story.

The Arm AGI processor, from blueprints to silicon

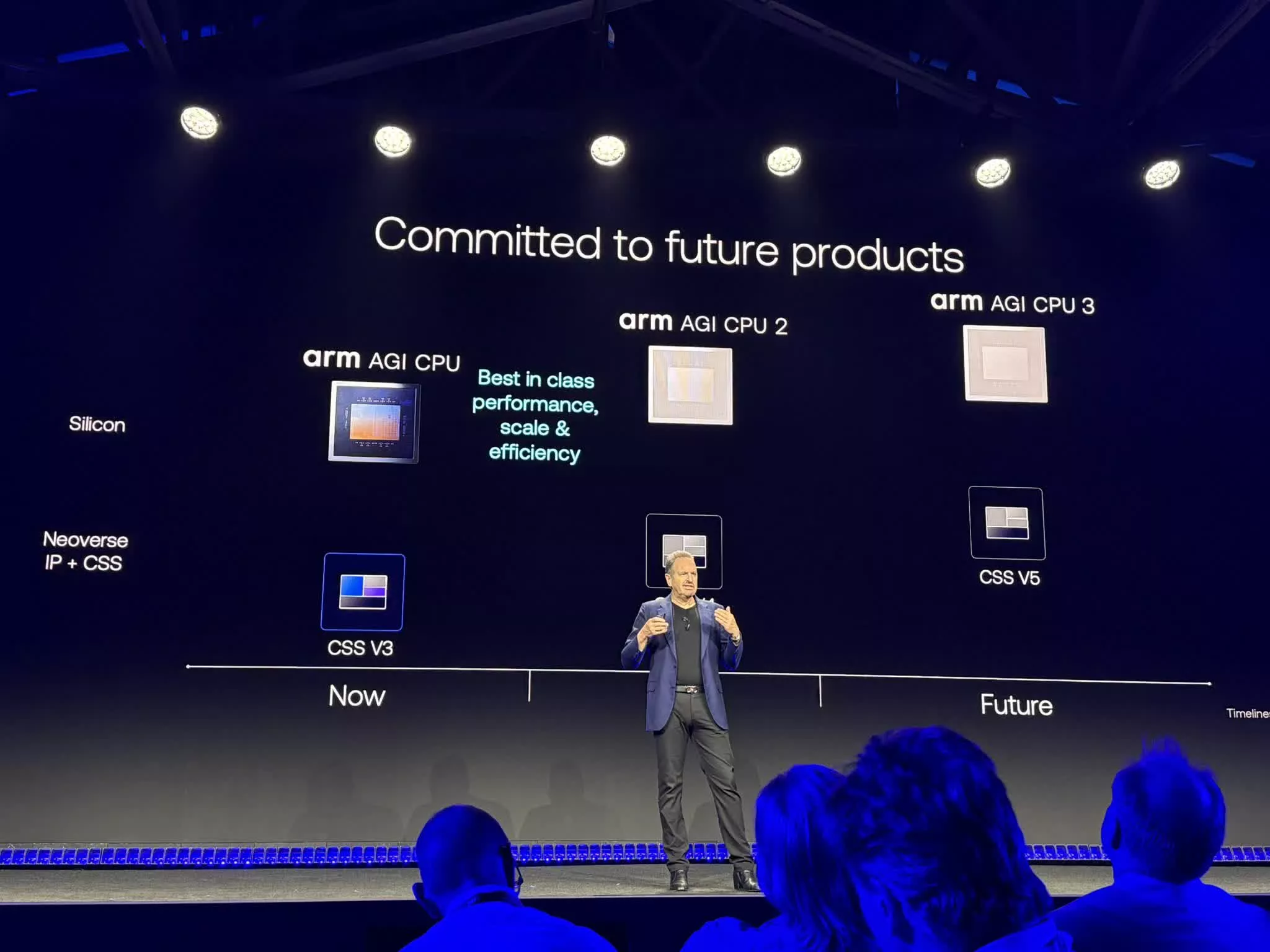

Into that demand inflection, Arm is making the biggest strategic bet in its history. The Arm AGI processor is the first product from a new data center silicon product line, with follow-on generations already committed.

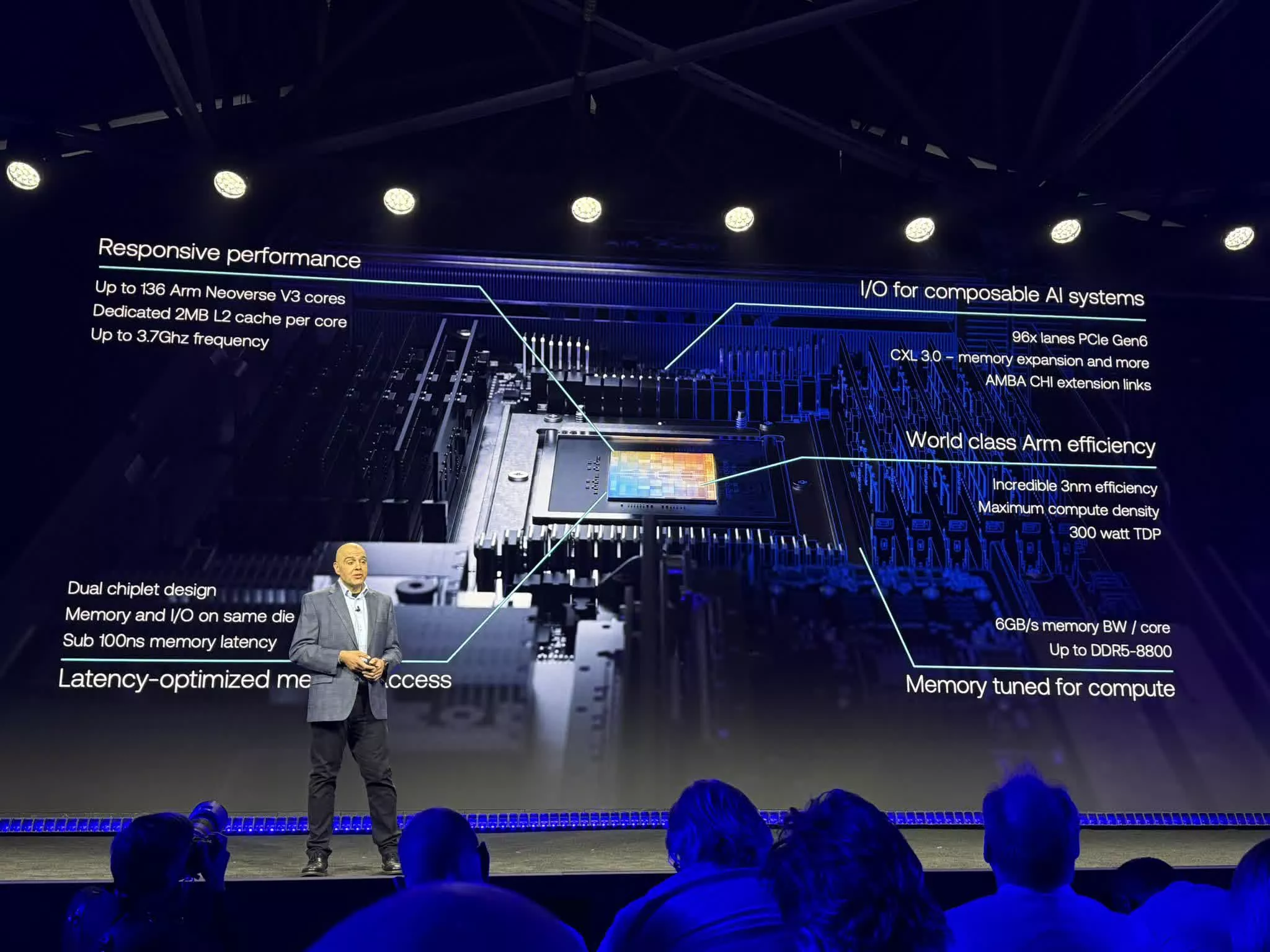

The specs are serious. Up to 136 Neoverse V3 cores running at up to 3.7GHz in a dual-chiplet design. Dedicated 2MB L2 cache per core. 6GB/s memory bandwidth per core at sub-100ns latency with DDR5-8800 support and up to 6TB memory capacity per chip. 96 lanes of PCIe Gen6, CXL 3.0 for memory expansion, and AMBA CHI extension links. All at a 300-watt TDP, manufactured by TSMC on 3nm.

Every core runs a dedicated thread with no SMT and no throttling under sustained load. That matters for agentic workloads where deterministic, predictable per-task performance across thousands of parallel operations is the design target, not peak single-thread burst.

The rack-scale story is where it gets especially interesting to data centers. The reference server is a 1OU, 2-node design packing 272 cores per blade. Thirty blades in a standard air-cooled 36kW rack deliver 8,160 cores. Arm has partnered with Supermicro on a liquid-cooled 200kW configuration housing 336 AGI CPUs for over 45,000 cores.
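The rack figures can be sanity-checked with simple arithmetic. The Python sketch below assumes one top-bin 136-core CPU per node and uses the 300-watt TDP quoted above. The `RackConfig` class is purely illustrative, and the share of rack power that goes to memory, networking, and cooling is not broken out in Arm's materials, so the power ratio is only a rough indicator.

```python
from dataclasses import dataclass

CORES_PER_CPU = 136   # top configuration ("up to 136" cores)
CPU_TDP_WATTS = 300   # quoted TDP per chip


@dataclass(frozen=True)
class RackConfig:
    name: str
    cpus: int
    rack_power_kw: float

    @property
    def cores(self) -> int:
        return self.cpus * CORES_PER_CPU

    @property
    def cpu_tdp_kw(self) -> float:
        return self.cpus * CPU_TDP_WATTS / 1000

    @property
    def cpu_share_of_rack_power(self) -> float:
        return self.cpu_tdp_kw / self.rack_power_kw


# 30 blades x 2 nodes per blade, assuming one CPU per node.
air_cooled = RackConfig("air-cooled 36kW", cpus=30 * 2, rack_power_kw=36)
liquid_cooled = RackConfig("liquid-cooled 200kW", cpus=336, rack_power_kw=200)

for rack in (air_cooled, liquid_cooled):
    print(
        f"{rack.name}: {rack.cores:,} cores, "
        f"{rack.cpu_tdp_kw:.1f} kW of CPU TDP "
        f"({rack.cpu_share_of_rack_power:.0%} of rack power)"
    )
```

The core counts match the quoted 8,160 and "over 45,000" figures, and in both configurations CPU TDP accounts for roughly half of the stated rack power budget.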

In these configurations, Arm claims more than 2x performance per rack versus the latest x86 systems, achieved through compounding architectural advantages: class-leading memory bandwidth that maintains throughput under sustained load, high single-threaded Neoverse V3 performance, and more usable threads doing more work per thread. These kinds of claims obviously need a lot of third-party validation, and it's something Signal65 is already looking at.

Arm is also contributing the 1OU reference server design, firmware, and diagnostic tooling to the Open Compute Project under the DC-MHS standard. Arm is not just selling a chip, it is trying to seed an open platform ecosystem.

The customer list tells a strategic story. Meta is the lead partner and co-developer, deploying AGI CPU alongside its custom MTIA accelerator with a multi-generation roadmap commitment.

Additional confirmed deployment partners include Cerebras, Cloudflare, F5, OpenAI, Positron, Rebellions, SAP, and SK Telecom. OEM systems from ASRock Rack, Lenovo, and Supermicro are available to order now. And more than 50 ecosystem companies, including AWS, Broadcom, Google, Marvell, Micron, Microsoft, Nvidia, Samsung, SK Hynix, and TSMC, have issued supporting statements.

OpenAI offered a particularly telling quote, describing the AGI CPU as "strengthening the orchestration layer that coordinates large scale AI workloads." That is exactly the use case profile this chip was built for.

This was not a cold start

Arm did not show up to the data center market today with a blank resume. All three major hyperscalers already run Arm Neoverse-based server processors. AWS Graviton is now in its fifth generation with 192 Neoverse V3 cores. Microsoft Cobalt 200 is built on CSS V3. Google Axion runs on Neoverse N3. Nvidia Vera, launched at GTC, is also Neoverse-based. Over 1 billion Neoverse cores are deployed in data centers globally.

Independent performance data validates the trajectory. In Signal65 Lab Insight testing, Neoverse-powered AWS Graviton4 delivered up to 168% higher token throughput than AMD EPYC and 162% better performance than Intel Xeon in LLM inference testing with Meta Llama 3.1 8B.

In database workloads, Graviton4 handled up to 93% more operations per second than x86-based instances. The Arm architecture is already winning performance benchmarks in the cloud. The AGI CPU brings that to a direct-sold product.

This also means that rather than hearing about a new Arm architecture design and having to wait years to see it productized, that timeline could move up substantially, effectively increasing the competitive cadence for Arm vs x86 alternatives.

The financial logic is straightforward. Under the IP licensing model, Arm collects 1-2% royalties per chip sold. By selling finished silicon directly, Arm captures the full chip margin. That is a potentially huge revenue increase per unit. Speculation about Arm making this move has circulated for over a year. What makes the timing notable is that agentic AI creates net-new demand that does not directly cannibalize the existing licensee business.
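How large that per-unit increase could be follows directly from the royalty rate. The Python sketch below assumes the royalty is a percentage of the chip's selling price and that a direct-sold chip would sell at a comparable price. Both are simplifications, and the function name is hypothetical. Note that it compares revenue, not profit: direct sales also carry manufacturing, packaging, and support costs that a royalty stream does not.

```python
def direct_sale_revenue_multiple(royalty_rate: float) -> float:
    """Revenue per chip from a direct sale divided by revenue per chip
    from a royalty. The chip price cancels: price / (rate * price)."""
    if not 0.0 < royalty_rate <= 1.0:
        raise ValueError("royalty_rate must be in (0, 1]")
    return 1.0 / royalty_rate


# The 1-2% royalty range cited above.
multiple_at_2_percent = direct_sale_revenue_multiple(0.02)
multiple_at_1_percent = direct_sale_revenue_multiple(0.01)

print(
    f"Revenue per chip: about {multiple_at_2_percent:.0f}x to "
    f"{multiple_at_1_percent:.0f}x the royalty, before costs"
)
```

Even after subtracting the cost of building the chip, a gross revenue multiple in that range leaves plenty of room for the per-unit economics to improve.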

Competitive ripple effects

The current server CPU market splits roughly 60% Intel, 24% AMD, 6% Nvidia Grace, with the remainder spread across custom Arm-based designs. That mix is about to get more complicated.

Intel is under the most pressure. Already losing share to AMD, Intel has cancelled its mainstream Diamond Rapids-SP platform, leaving its highest-volume data center segment without a new generation until roughly 2028. Of all the incumbents, Intel has the least room to absorb a new entrant.

AMD has seen tremendous growth in the data center over the past several years, and EPYC Turin is genuinely competitive. But the concern here is whether that growth trajectory could slow under added competitive pressure. Meta has been one of the biggest AMD data center CPU customers. Meta co-developing a multi-generation CPU roadmap with Arm is a direct signal about where that infrastructure is heading. The power efficiency math, 2x performance per rack at lower power, is a structural challenge for x86 economics over time.

Nvidia is positioned as a partner, with Jensen Huang offering a supporting quote in the press release. But Grace and Vera could easily have been seen as the default high-performance Arm CPU for the data center. That assumption is now in question. The AGI CPU competes for the same orchestration and control plane workloads that Vera targets. The question going forward is whether Grace and Vera get scoped to Nvidia-specific infrastructure buildouts, paired with Blackwell GPUs in NVL racks, rather than serving as a general-purpose data center CPU platform.

Qualcomm is an interesting player to watch. Its data center ambitions have focused more on NPU-based accelerators than standalone CPUs, and the company has shown off rack-scale inference implementations with decent opening momentum. The AGI CPU does not directly compete with that approach, but it reshapes the conversation about who provides the CPU fabric around those accelerators.

The licensee risk for Arm is real but managed. SoftBank acquiring Ampere for $6.5 billion effectively neutralized the most direct conflict. Hyperscaler custom chip designers at AWS, Microsoft, and Google are unlikely to be disrupted since they design their own silicon on Arm IP.

The broader perspective is that there is still plenty of room for all of these players. The market is expanding, not just reshuffling. For Arm starting from 0% share in direct silicon sales, and even Qualcomm starting from near-zero in data center CPU presence, every bit of growth is a positive. They are not defending share. They are taking it. And the agentic demand wave means the pie itself is growing.

What this means going forward

Three forces are converging simultaneously. Agentic AI is creating a new demand category where CPUs are the primary compute element, not a sidecar. Supply has not anticipated this demand, with constrained wafer capacity, stretched lead times, and rising prices across the industry. And Arm's entry as a direct silicon vendor with a purpose-built product validates the opportunity while reshaping the competitive landscape.

The most interesting strategic question is not whether the Arm AGI CPU succeeds in isolation. It is whether this moment represents a permanent shift in how we think about data center architecture, from GPU-centric to genuinely heterogeneous, with CPUs playing a far larger role than the training-era mindset assumed.

For enterprises and cloud operators, the practical takeaway is simple. Agent-driven workloads are going to need more CPU capacity than anyone planned for, and the options for sourcing that capacity just expanded significantly.

This is not the end of GPU dominance. Training and large-scale inference remain GPU territory. But the work that agents do beyond the model is CPU work. And the industry is finally building for that reality.