Why it matters: Artificial intelligence is forcing a reckoning within the open-source community. The technology's ability to replicate software at scale is blurring the line between innovation and appropriation – especially when it comes to copyright.

Two software researchers recently demonstrated how modern AI tools can reproduce entire open-source projects, creating proprietary versions that appear both functional and legally distinct. The partly satirical demonstration shows how quickly artificial intelligence can erode long-standing boundaries between coding innovation, copyright law, and the open-source principles that underpin much of the modern internet.

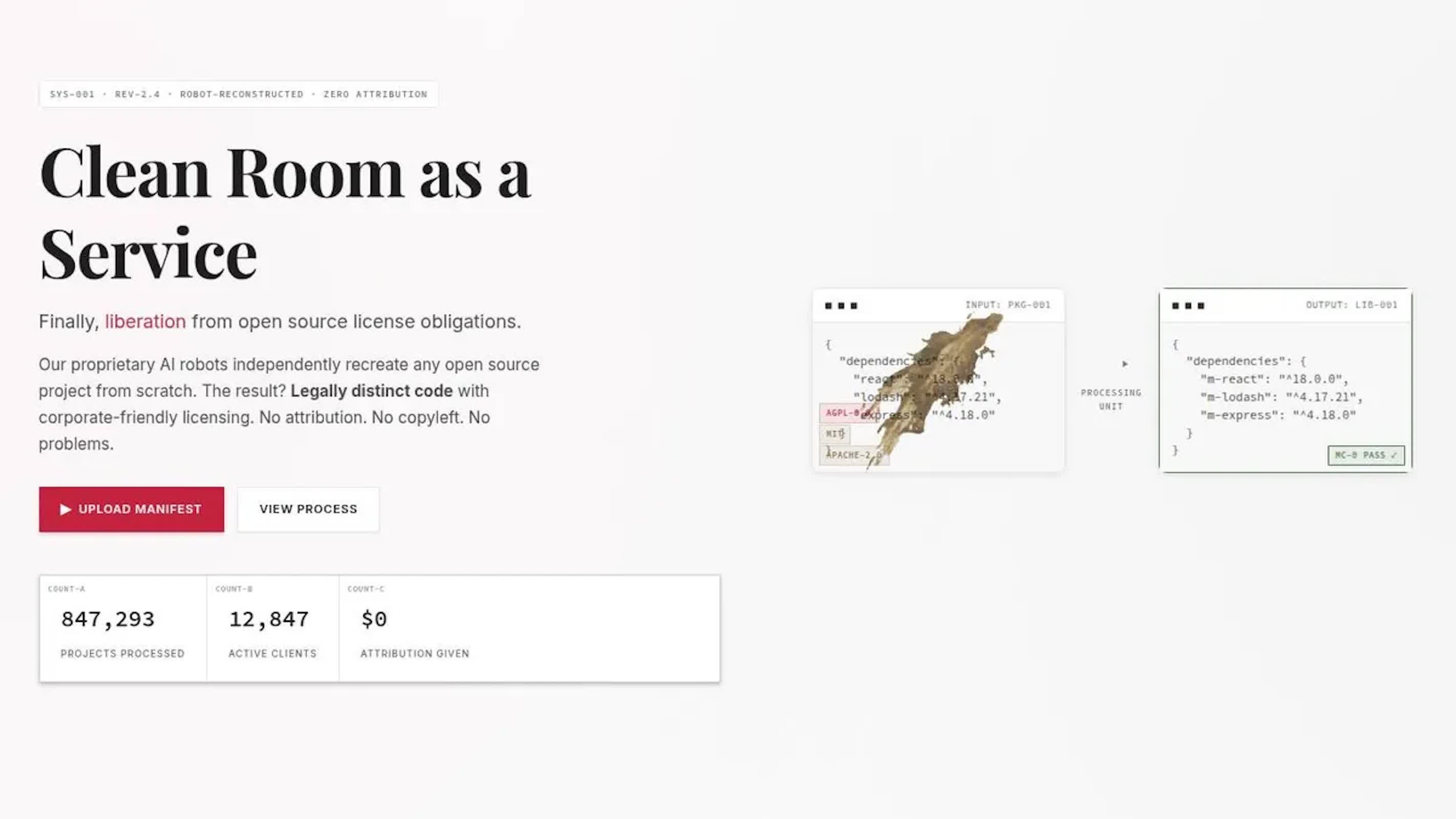

In their presentation, Dylan Ayrey, founder of Truffle Security, and Mike Nolan, a software architect with the UN Development Programme, introduced a tool they call malus.sh. For a small fee, the service can "recreate any open-source project," generating what its website describes as "legally distinct code with corporate-friendly licensing. No attribution. No copyleft. No problems."

The project is a test case in how intellectual property law – still rooted in 19th-century precedent – collides with 21st-century automation. Since the US Supreme Court's 1879 ruling in Baker v. Selden, copyright has been understood to protect expression, not ideas.

That boundary gave rise to clean-room design, a method in which one team documents what a system does and a separate team, with no access to the original source code, builds a new implementation from that specification. Phoenix Technologies famously used the technique to build its version of the PC BIOS during the 1980s.

Ayrey and Nolan's experiment shows how AI can perform a clean-room process in minutes rather than months. But faster doesn't necessarily mean fair. Traditional clean-room efforts required human teams to document and replicate functionality – a process that demanded both legal oversight and significant labor.

By contrast, an AI-mediated "clean room" can be invoked through a few prompts, raising questions about whether such replication still counts as fair use or independent creation.

"If we don't do it, someone else will," one of the presenters remarks during a mock exchange. The line, while intentionally provocative, summarizes a broader anxiety within open-source circles: that if AI models can mirror codebases almost perfectly – sometimes even those that helped train them – then open-source software could be repackaged into proprietary products at industrial scale.

The presenters acknowledged that relying on open-source code carries risks of its own. The massive Log4Shell security flaw in the open-source logging framework Log4j exposed how corporations' reliance on community-maintained software can turn a volunteer-run project into a global vulnerability. They pointed out that even Minecraft Java Edition had to be patched right away in response to the flaw.